The Year 2038 Problem is already here →

John Feminella recently wrote an interesting Twitter thread on an instance of the Year 2038 Problem. The Y2038 problem relates to representing time as the number of seconds passed since 00:00:00 UTC on 1 January 1970 and storing it as a signed 32-bit integer. Such implementations cannot encode times after 03:14:07 UTC on 19 January 2038. One second later the counter overflows, and what happens next depends on the implementation; a common outcome is a wrap-around to 13 December 1901.
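To make the limit concrete, here is a minimal Python sketch of the arithmetic. The names `INT32_MAX`, `from_epoch` and `wrap_to_int32` are only illustrative, and the wrap-around is simulated with `struct`, since Python's own integers do not overflow.

```python
import struct
from datetime import datetime, timedelta, timezone

INT32_MAX = 2**31 - 1  # largest value a signed 32-bit integer can hold
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def from_epoch(seconds: int) -> datetime:
    """Convert seconds since the Unix epoch to a UTC datetime."""
    return EPOCH + timedelta(seconds=seconds)


def wrap_to_int32(value: int) -> int:
    """Simulate two's-complement wrap-around of a signed 32-bit counter."""
    return struct.unpack("<i", struct.pack("<I", value & 0xFFFFFFFF))[0]


# The last moment a signed 32-bit time value can represent.
last_representable = from_epoch(INT32_MAX)

# One second later, a wrapping counter jumps to the most negative value.
wrapped = wrap_to_int32(INT32_MAX + 1)
after_wrap = from_epoch(wrapped)

print(last_representable.isoformat())  # 2038-01-19T03:14:07+00:00
print(after_wrap.isoformat())          # 1901-12-13T20:45:52+00:00
```

Not every system wraps like this; some raise an error or clamp the value instead, which is why the failure mode is hard to predict.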

2038 might seem like a long way off, but the effects of Y2038 are already being felt:

⏲️ As of today, we have about eighteen years to go until the Y2038 problem occurs.

But the Y2038 problem will be giving us headaches long, long before 2038 arrives.

I’d like to tell you a story about this. One of my clients is responsible for several of the world’s top 100 pension funds.

They had a nightly batch job that computed the required contributions, made from projections 20 years into the future.

It crashed on January 19, 2018 — 20 years before Y2038. No one knew what was wrong at first.

This batch job had never, ever crashed before, as far as anyone remembered or had logs for.

The person who originally wrote it had been dead for at least 15 years, and in any case hadn’t been employed by the firm for decades. The program was not that big, maybe a few hundred lines.

But it was fairly impenetrable — written in a style that favored computational efficiency over human readability.

And of course, there were zero tests. As luck would have it, a change in the orchestration of the scripts that ran in this environment had been pushed the day before.

This was believed to be the culprit. Engineering rolled things back to the previous release.

Unfortunately, this made the problem worse. You see, the program’s purpose was to compute certain contribution rates for certain kinds of pension funds.

It did this by writing out a big CSV file. The results of this CSV file were inputs to other programs.

Those ran at various times each day. Another program, the benefits distributor, was supposed to alert people when contributions weren’t enough for projections.

It hadn’t run yet when the initial problem occurred. But it did now. Noticing that there was no output from the first program since it had crashed, it treated this case as “all contributions are 0”.

This, of course, was not what it should do.

But no one knew it behaved this way since, again, the first program had never crashed. This immediately caused a massive cascade of alert emails to the internal pension fund managers.

They promptly started flipping out, because one reason contributions might show up as insufficient is if projections think the economy is about to tank. The firm had recently moved to the cloud and I had been retained to architect the transition and make the migration go smoothly.

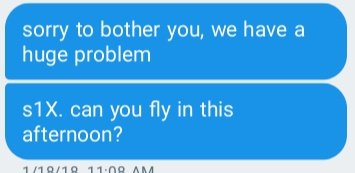

They’d completed the work months before. I got an unexpected text from the CIO:

S1X is their word for “worse than severity 1 because it’s cascading to other unrelated parts of the business”.

There had only been one other S1X in twelve months. I got onsite late that night. We eventually diagnosed the issue by firing up an environment and isolating the script so that only it was running.

The problem immediately became more obvious; there was a helpful error message that pointed to the problematic part. We were able to resolve the issue by hotpatching the script.

But by then, substantive damage had already been done because contributions hadn’t been processed that day.

It cost about $1.7M to manually catch up over the next two weeks. The moral of the story is that Y2038 isn’t “coming”.

It’s already here. Fix your stuff. ⏹️

This story is both a forewarning of our future struggles and a lesson in how negligence and underinvestment over the years will break even very good systems. Software systems are inherently fragile, and changes in the context around them necessitate constant vigilance. If you don’t fix them, they will break. Good old code can be used, but it should never be neglected.
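The date in the story follows directly from the arithmetic: a job that looks 20 years ahead reaches the 32-bit limit 20 years early. The sketch below is a hypothetical check, not the firm's code. The function names and the example run times are assumptions made for illustration.

```python
from datetime import datetime, timezone

INT32_MAX = 2**31 - 1   # limit of a signed 32-bit seconds counter
HORIZON_YEARS = 20      # projection horizon mentioned in the story


def projection_end(run_time: datetime) -> datetime:
    """Return the moment HORIZON_YEARS after run_time."""
    try:
        return run_time.replace(year=run_time.year + HORIZON_YEARS)
    except ValueError:
        # 29 February with a non-leap target year: fall back to 28 February.
        return run_time.replace(year=run_time.year + HORIZON_YEARS, day=28)


def fits_in_int32(moment: datetime) -> bool:
    """True if the moment is representable as signed 32-bit epoch seconds."""
    return int(moment.timestamp()) <= INT32_MAX


# Hypothetical nightly run times (the real schedule is not given).
night_before = datetime(2018, 1, 18, 23, 0, tzinfo=timezone.utc)
crash_night = datetime(2018, 1, 19, 23, 0, tzinfo=timezone.utc)

print(fits_in_int32(projection_end(night_before)))  # True
print(fits_in_int32(projection_end(crash_night)))   # False
```

A check like this, run against the furthest date a system ever computes rather than against today's date, is one way to find out how early Y2038 will arrive for that system.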